Redis

Redis is an advanced key-value cache and store. It is often referred to as a data structure server since keys can contain strings, hashes, lists, sets, sorted sets, bitmaps and hyperloglogs.

TL;DR

# Testing configuration

$ helm repo add bitnami https://charts.bitnami.com/bitnami

$ helm install my-release bitnami/redis

# Production configuration

$ helm repo add bitnami https://charts.bitnami.com/bitnami

$ helm install my-release bitnami/redis --values values-production.yaml

Introduction

This chart bootstraps a Redis deployment on a Kubernetes cluster using the Helm package manager.

Bitnami charts can be used with Kubeapps for deployment and management of Helm Charts in clusters. This chart has been tested to work with NGINX Ingress, cert-manager, fluentd and Prometheus on top of the BKPR.

Choose between Redis Helm Chart and Redis Cluster Helm Chart

You can choose either of the two Redis Helm charts to deploy a Redis cluster. The Redis Helm Chart deploys a master-slave cluster, optionally using Redis Sentinel, while the Redis Cluster Helm Chart deploys a Redis Cluster topology with sharding. The main features of each chart are the following:

| Redis | Redis Cluster |
|---|---|
| Supports multiple databases | Supports only one database. Better if you have a big dataset |
| Single write point (single master) | Multiple write points (multiple masters) |

Prerequisites

- Kubernetes 1.12+

- Helm 2.12+ or Helm 3.0-beta3+

- PV provisioner support in the underlying infrastructure

Installing the Chart

To install the chart with the release name my-release:

$ helm install my-release bitnami/redis

The command deploys Redis on the Kubernetes cluster in the default configuration. The Parameters section lists the parameters that can be configured during installation.

Tip: List all releases using helm list

Uninstalling the Chart

To uninstall/delete the my-release deployment:

$ helm delete my-release

The command removes all the Kubernetes components associated with the chart and deletes the release.

Parameters

The following table lists the configurable parameters of the Redis chart and their default values.

| Parameter | Description | Default |
|---|---|---|
| global.imageRegistry | Global Docker image registry | nil |
| global.imagePullSecrets | Global Docker registry secret names as an array | [] (does not add image pull secrets to deployed pods) |
| global.storageClass | Global storage class for dynamic provisioning | nil |
| global.redis.password | Redis password (overrides password) | nil |
| image.registry | Redis Image registry | docker.io |
| image.repository | Redis Image name | bitnami/redis |
| image.tag | Redis Image tag | {TAG_NAME} |
| image.pullPolicy | Image pull policy | IfNotPresent |
| image.pullSecrets | Specify docker-registry secret names as an array | nil |
| nameOverride | String to partially override redis.fullname template with a string (will prepend the release name) | nil |
| fullnameOverride | String to fully override redis.fullname template with a string | nil |
| cluster.enabled | Use master-slave topology | true |
| cluster.slaveCount | Number of slaves | 2 |
| existingSecret | Name of existing secret object (for password authentication) | nil |
| existingSecretPasswordKey | Name of key containing password to be retrieved from the existing secret | nil |
| usePassword | Use password | true |
| usePasswordFile | Mount passwords as files instead of environment variables | false |
| password | Redis password (ignored if existingSecret set) | Randomly generated |
| configmap | Additional common Redis node configuration (this value is evaluated as a template) | See values.yaml |
| clusterDomain | Kubernetes DNS Domain name to use | cluster.local |
| networkPolicy.enabled | Enable NetworkPolicy | false |
| networkPolicy.allowExternal | Don't require client label for connections | true |
| networkPolicy.ingressNSMatchLabels | Allow connections from other namespaces | {} |
| networkPolicy.ingressNSPodMatchLabels | For other namespaces match by pod labels and namespace labels | {} |
| securityContext.enabled | Enable security context (both redis master and slave pods) | true |
| securityContext.fsGroup | Group ID for the container (both redis master and slave pods) | 1001 |
| securityContext.runAsUser | User ID for the container (both redis master and slave pods) | 1001 |
| securityContext.sysctls | Set namespaced sysctls for the container (both redis master and slave pods) | nil |
| serviceAccount.create | Specifies whether a ServiceAccount should be created | false |
| serviceAccount.name | The name of the ServiceAccount to create | Generated using the fullname template |
| rbac.create | Specifies whether RBAC resources should be created | false |
| rbac.role.rules | Rules to create | [] |
| metrics.enabled | Start a side-car prometheus exporter | false |
| metrics.image.registry | Redis exporter image registry | docker.io |
| metrics.image.repository | Redis exporter image name | bitnami/redis-exporter |
| metrics.image.tag | Redis exporter image tag | {TAG_NAME} |
| metrics.image.pullPolicy | Image pull policy | IfNotPresent |
| metrics.image.pullSecrets | Specify docker-registry secret names as an array | nil |
| metrics.extraArgs | Extra arguments for the exporter binary (see the redis_exporter documentation for possible values) | {} |
| metrics.podLabels | Additional labels for Metrics exporter pod | {} |
| metrics.podAnnotations | Additional annotations for Metrics exporter pod | {} |
| metrics.resources | Exporter resource requests/limit | Memory: 256Mi, CPU: 100m |
| metrics.serviceMonitor.enabled | If true, creates a Prometheus Operator ServiceMonitor (also requires metrics.enabled to be true) | false |
| metrics.serviceMonitor.namespace | Optional namespace which Prometheus is running in | nil |
| metrics.serviceMonitor.interval | How frequently to scrape metrics (use by default, falling back to Prometheus' default) | nil |
| metrics.serviceMonitor.selector | Default to kube-prometheus install (CoreOS recommended), but should be set according to Prometheus install | { prometheus: kube-prometheus } |
| metrics.service.type | Kubernetes Service type (redis metrics) | ClusterIP |
| metrics.service.annotations | Annotations for the services to monitor (redis master and redis slave service) | {} |
| metrics.service.labels | Additional labels for the metrics service | {} |
| metrics.service.loadBalancerIP | loadBalancerIP if redis metrics service type is LoadBalancer | nil |
| metrics.priorityClassName | Metrics exporter pod priorityClassName | {} |
| metrics.prometheusRule.enabled | Set this to true to create prometheusRules for Prometheus operator | false |
| metrics.prometheusRule.additionalLabels | Additional labels that can be used so prometheusRules will be discovered by Prometheus | {} |
| metrics.prometheusRule.namespace | Namespace where prometheusRules resource should be created | Same namespace as redis |
| metrics.prometheusRule.rules | Rules to be created, check values for an example. | [] |
| persistence.existingClaim | Provide an existing PersistentVolumeClaim | nil |
| master.persistence.enabled | Use a PVC to persist data (master node) | true |
| master.persistence.path | Path to mount the volume at, to use other images | /data |
| master.persistence.subPath | Subdirectory of the volume to mount at | "" |
| master.persistence.storageClass | Storage class of backing PVC | generic |
| master.persistence.accessModes | Persistent Volume Access Modes | [ReadWriteOnce] |
| master.persistence.size | Size of data volume | 8Gi |
| master.persistence.matchLabels | matchLabels persistent volume selector | {} |
| master.persistence.matchExpressions | matchExpressions persistent volume selector | {} |
| master.statefulset.updateStrategy | Update strategy for StatefulSet | onDelete |
| master.statefulset.rollingUpdatePartition | Partition update strategy | nil |
| master.podLabels | Additional labels for Redis master pod | {} |
| master.podAnnotations | Additional annotations for Redis master pod | {} |
| master.extraEnvVars | Additional environment variables passed to the pod of the master's StatefulSet | [] |
| master.extraEnvVarCMs | Additional environment variables ConfigMap passed to the pod of the master's StatefulSet | [] |
| master.extraEnvVarsSecret | Additional environment variables Secret passed to the master's StatefulSet | [] |
| podDisruptionBudget.enabled | Pod Disruption Budget toggle | false |
| podDisruptionBudget.minAvailable | Minimum available pods | 1 |
| podDisruptionBudget.maxUnavailable | Maximum unavailable pods | nil |
| redisPort | Redis port (in both master and slaves) | 6379 |
| tls.enabled | Enable TLS support for replication traffic | false |
| tls.authClients | Require clients to authenticate or not | true |
| tls.certificatesSecret | Name of the secret that contains the certificates | nil |
| tls.certFilename | Certificate filename | nil |
| tls.certKeyFilename | Certificate key filename | nil |
| tls.certCAFilename | CA Certificate filename | nil |
| tls.dhParamsFilename | DH params (in order to support DH based ciphers) | nil |
| master.command | Redis master entrypoint string. The command redis-server is executed if this is not provided. Note this is prepended with exec | /run.sh |
| master.preExecCmds | Text to insert into the startup script immediately prior to master.command. Use this if you need to run other ad-hoc commands as part of startup | nil |
| master.configmap | Additional Redis configuration for the master nodes (this value is evaluated as a template) | nil |
| master.disableCommands | Array of Redis commands to disable (master) | ["FLUSHDB", "FLUSHALL"] |
| master.extraFlags | Redis master additional command line flags | [] |
| master.nodeSelector | Redis master Node labels for pod assignment | {"beta.kubernetes.io/arch": "amd64"} |
| master.tolerations | Toleration labels for Redis master pod assignment | [] |
| master.affinity | Affinity settings for Redis master pod assignment | {} |
| master.schedulerName | Name of an alternate scheduler | nil |
| master.service.type | Kubernetes Service type (redis master) | ClusterIP |
| master.service.port | Kubernetes Service port (redis master) | 6379 |
| master.service.nodePort | Kubernetes Service nodePort (redis master) | nil |
| master.service.annotations | Annotations for redis master service | {} |
| master.service.labels | Additional labels for redis master service | {} |
| master.service.loadBalancerIP | loadBalancerIP if redis master service type is LoadBalancer | nil |
| master.service.loadBalancerSourceRanges | loadBalancerSourceRanges if redis master service type is LoadBalancer | nil |
| master.resources | Redis master CPU/Memory resource requests/limits | Memory: 256Mi, CPU: 100m |
| master.livenessProbe.enabled | Turn on and off liveness probe (redis master pod) | true |
| master.livenessProbe.initialDelaySeconds | Delay before liveness probe is initiated (redis master pod) | 5 |
| master.livenessProbe.periodSeconds | How often to perform the probe (redis master pod) | 5 |
| master.livenessProbe.timeoutSeconds | When the probe times out (redis master pod) | 5 |
| master.livenessProbe.successThreshold | Minimum consecutive successes for the probe to be considered successful after having failed (redis master pod) | 1 |
| master.livenessProbe.failureThreshold | Minimum consecutive failures for the probe to be considered failed after having succeeded. | 5 |
| master.readinessProbe.enabled | Turn on and off readiness probe (redis master pod) | true |
| master.readinessProbe.initialDelaySeconds | Delay before readiness probe is initiated (redis master pod) | 5 |
| master.readinessProbe.periodSeconds | How often to perform the probe (redis master pod) | 5 |
| master.readinessProbe.timeoutSeconds | When the probe times out (redis master pod) | 1 |
| master.readinessProbe.successThreshold | Minimum consecutive successes for the probe to be considered successful after having failed (redis master pod) | 1 |
| master.readinessProbe.failureThreshold | Minimum consecutive failures for the probe to be considered failed after having succeeded. | 5 |
| master.shareProcessNamespace | Redis Master pod shareProcessNamespace option. Enables /pause to reap zombie PIDs. | false |
| master.priorityClassName | Redis Master pod priorityClassName | {} |
| volumePermissions.enabled | Enable init container that changes volume permissions in the registry (for cases where the default k8s runAsUser and fsUser values do not work) | false |
| volumePermissions.image.registry | Init container volume-permissions image registry | docker.io |
| volumePermissions.image.repository | Init container volume-permissions image name | bitnami/minideb |
| volumePermissions.image.tag | Init container volume-permissions image tag | buster |
| volumePermissions.image.pullPolicy | Init container volume-permissions image pull policy | Always |
| volumePermissions.resources | Init container volume-permissions CPU/Memory resource requests/limits | {} |
| slave.service.type | Kubernetes Service type (redis slave) | ClusterIP |
| slave.service.nodePort | Kubernetes Service nodePort (redis slave) | nil |
| slave.service.annotations | Annotations for redis slave service | {} |
| slave.service.labels | Additional labels for redis slave service | {} |
| slave.service.port | Kubernetes Service port (redis slave) | 6379 |
| slave.service.loadBalancerIP | loadBalancerIP if Redis slave service type is LoadBalancer | nil |
| slave.service.loadBalancerSourceRanges | loadBalancerSourceRanges if Redis slave service type is LoadBalancer | nil |
| slave.command | Redis slave entrypoint string. The command redis-server is executed if this is not provided. Note this is prepended with exec | /run.sh |
| slave.preExecCmds | Text to insert into the startup script immediately prior to slave.command. Use this if you need to run other ad-hoc commands as part of startup | nil |
| slave.configmap | Additional Redis configuration for the slave nodes (this value is evaluated as a template) | nil |
| slave.disableCommands | Array of Redis commands to disable (slave) | [FLUSHDB, FLUSHALL] |
| slave.extraFlags | Redis slave additional command line flags | [] |
| slave.livenessProbe.enabled | Turn on and off liveness probe (redis slave pod) | true |
| slave.livenessProbe.initialDelaySeconds | Delay before liveness probe is initiated (redis slave pod) | 5 |
| slave.livenessProbe.periodSeconds | How often to perform the probe (redis slave pod) | 5 |
| slave.livenessProbe.timeoutSeconds | When the probe times out (redis slave pod) | 5 |
| slave.livenessProbe.successThreshold | Minimum consecutive successes for the probe to be considered successful after having failed (redis slave pod) | 1 |
| slave.livenessProbe.failureThreshold | Minimum consecutive failures for the probe to be considered failed after having succeeded. | 5 |
| slave.readinessProbe.enabled | Turn on and off readiness probe (redis slave pod) | true |
| slave.readinessProbe.initialDelaySeconds | Delay before readiness probe is initiated (redis slave pod) | 5 |
| slave.readinessProbe.periodSeconds | How often to perform the probe (redis slave pod) | 5 |
| slave.readinessProbe.timeoutSeconds | When the probe times out (redis slave pod) | 1 |
| slave.readinessProbe.successThreshold | Minimum consecutive successes for the probe to be considered successful after having failed (redis slave pod) | 1 |
| slave.readinessProbe.failureThreshold | Minimum consecutive failures for the probe to be considered failed after having succeeded. (redis slave pod) | 5 |
| slave.shareProcessNamespace | Redis slave pod shareProcessNamespace option. Enables /pause to reap zombie PIDs. | false |
| slave.persistence.enabled | Use a PVC to persist data (slave node) | true |
| slave.persistence.path | Path to mount the volume at, to use other images | /data |
| slave.persistence.subPath | Subdirectory of the volume to mount at | "" |
| slave.persistence.storageClass | Storage class of backing PVC | generic |
| slave.persistence.accessModes | Persistent Volume Access Modes | [ReadWriteOnce] |
| slave.persistence.size | Size of data volume | 8Gi |
| slave.persistence.matchLabels | matchLabels persistent volume selector | {} |
| slave.persistence.matchExpressions | matchExpressions persistent volume selector | {} |
| slave.statefulset.updateStrategy | Update strategy for StatefulSet | onDelete |
| slave.statefulset.rollingUpdatePartition | Partition update strategy | nil |
| slave.extraEnvVars | Additional environment variables passed to the pod of the slave's StatefulSet | [] |
| slave.extraEnvVarCMs | Additional environment variables ConfigMap passed to the pod of the slave's StatefulSet | [] |
| slave.extraEnvVarsSecret | Additional environment variables Secret passed to the slave's StatefulSet | [] |
| slave.podLabels | Additional labels for Redis slave pod | master.podLabels |
| slave.podAnnotations | Additional annotations for Redis slave pod | master.podAnnotations |
| slave.schedulerName | Name of an alternate scheduler | nil |
| slave.resources | Redis slave CPU/Memory resource requests/limits | {} |
| slave.affinity | Enable node/pod affinity for slaves | {} |
| slave.tolerations | Toleration labels for Redis slave pod assignment | [] |
| slave.spreadConstraints | Topology Spread Constraints for Redis slave pod | {} |
| slave.priorityClassName | Redis Slave pod priorityClassName | {} |
| sentinel.enabled | Enable sentinel containers | false |
| sentinel.usePassword | Use password for sentinel containers | true |
| sentinel.masterSet | Name of the sentinel master set | mymaster |
| sentinel.initialCheckTimeout | Timeout for querying the redis sentinel service for the active sentinel list | 5 |
| sentinel.quorum | Quorum for electing a new master | 2 |
| sentinel.downAfterMilliseconds | Timeout for detecting a Redis node is down | 60000 |
| sentinel.failoverTimeout | Timeout for performing an election failover | 18000 |
| sentinel.parallelSyncs | Number of parallel syncs in the cluster | 1 |
| sentinel.port | Redis Sentinel port | 26379 |
| sentinel.configmap | Additional Redis configuration for the sentinel nodes (this value is evaluated as a template) | nil |
| sentinel.staticID | Enable static IDs for sentinel replicas (if disabled, IDs will be randomly generated on startup) | false |
| sentinel.service.type | Kubernetes Service type (redis sentinel) | ClusterIP |
| sentinel.service.nodePort | Kubernetes Service nodePort (redis sentinel) | nil |
| sentinel.service.annotations | Annotations for redis sentinel service | {} |
| sentinel.service.labels | Additional labels for redis sentinel service | {} |
| sentinel.service.redisPort | Kubernetes Service port for Redis read only operations | 6379 |
| sentinel.service.sentinelPort | Kubernetes Service port for Redis sentinel | 26379 |
| sentinel.service.redisNodePort | Kubernetes Service node port for Redis read only operations | "" |
| sentinel.service.sentinelNodePort | Kubernetes Service node port for Redis sentinel | "" |
| sentinel.service.loadBalancerIP | loadBalancerIP if Redis sentinel service type is LoadBalancer | nil |
| sentinel.livenessProbe.enabled | Turn on and off liveness probe (redis sentinel pod) | true |
| sentinel.livenessProbe.initialDelaySeconds | Delay before liveness probe is initiated (redis sentinel pod) | 5 |
| sentinel.livenessProbe.periodSeconds | How often to perform the probe (redis sentinel container) | 5 |
| sentinel.livenessProbe.timeoutSeconds | When the probe times out (redis sentinel container) | 5 |
| sentinel.livenessProbe.successThreshold | Minimum consecutive successes for the probe to be considered successful after having failed (redis sentinel container) | 1 |
| sentinel.livenessProbe.failureThreshold | Minimum consecutive failures for the probe to be considered failed after having succeeded. | 5 |
| sentinel.readinessProbe.enabled | Turn on and off readiness probe (redis sentinel pod) | true |
| sentinel.readinessProbe.initialDelaySeconds | Delay before readiness probe is initiated (redis sentinel pod) | 5 |
| sentinel.readinessProbe.periodSeconds | How often to perform the probe (redis sentinel pod) | 5 |
| sentinel.readinessProbe.timeoutSeconds | When the probe times out (redis sentinel container) | 1 |
| sentinel.readinessProbe.successThreshold | Minimum consecutive successes for the probe to be considered successful after having failed (redis sentinel container) | 1 |
| sentinel.readinessProbe.failureThreshold | Minimum consecutive failures for the probe to be considered failed after having succeeded. (redis sentinel container) | 5 |
| sentinel.resources | Redis sentinel CPU/Memory resource requests/limits | {} |
| sentinel.image.registry | Redis Sentinel Image registry | docker.io |
| sentinel.image.repository | Redis Sentinel Image name | bitnami/redis-sentinel |
| sentinel.image.tag | Redis Sentinel Image tag | {TAG_NAME} |
| sentinel.image.pullPolicy | Image pull policy | IfNotPresent |
| sentinel.image.pullSecrets | Specify docker-registry secret names as an array | nil |
| sysctlImage.enabled | Enable an init container to modify Kernel settings | false |
| sysctlImage.command | sysctlImage command to execute | [] |
| sysctlImage.registry | sysctlImage Init container registry | docker.io |
| sysctlImage.repository | sysctlImage Init container name | bitnami/minideb |
| sysctlImage.tag | sysctlImage Init container tag | buster |
| sysctlImage.pullPolicy | sysctlImage Init container pull policy | Always |
| sysctlImage.mountHostSys | Mount the host /sys folder to /host-sys | false |
| sysctlImage.resources | sysctlImage Init container CPU/Memory resource requests/limits | {} |
| podSecurityPolicy.create | Specifies whether a PodSecurityPolicy should be created | false |

Specify each parameter using the --set key=value[,key=value] argument to helm install. For example,

$ helm install my-release \

--set password=secretpassword \

bitnami/redis

The above command sets the Redis server password to secretpassword.

Alternatively, a YAML file that specifies the values for the parameters can be provided while installing the chart. For example,

$ helm install my-release -f values.yaml bitnami/redis
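As an illustration, a values.yaml passed with -f could look like the sketch below. It sets the same password as the --set example and repeats two documented defaults to show how dotted parameter names nest. The file contents are an example only, not the chart's shipped values.yaml.

```yaml
# values.yaml (illustrative sketch, not the chart's shipped file)

# Equivalent of: --set password=secretpassword
password: secretpassword

# Documented defaults, repeated only to show how dotted keys nest
cluster:
  enabled: true
  slaveCount: 2
```

A dotted name from the parameter table, such as cluster.slaveCount, becomes the key slaveCount nested under cluster.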

Tip: You can use the default values.yaml

Note for minikube users: Current versions of minikube (v0.24.1 at the time of writing) provision hostPath persistent volumes that are only writable by root. Using the chart defaults causes the Redis pod to fail as it attempts to write to the /bitnami directory. Consider installing Redis with --set persistence.enabled=false. See minikube issue 1990 for more information.

Configuration and installation details

Rolling VS Immutable tags

It is strongly recommended to use immutable tags in a production environment. This ensures your deployment does not change automatically if the same tag is updated with a different image.

Bitnami will release a new chart updating its containers if a new version of the main container, significant changes, or critical vulnerabilities exist.

Production configuration

This chart includes a values-production.yaml file where you can find some parameters oriented to production configuration in comparison to the regular values.yaml. You can use this file instead of the default one.

- Number of slaves:

- cluster.slaveCount: 2

+ cluster.slaveCount: 3

- Enable NetworkPolicy:

- networkPolicy.enabled: false

+ networkPolicy.enabled: true

- Start a side-car prometheus exporter:

- metrics.enabled: false

+ metrics.enabled: true
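The three differences listed above can also be written as a values override. This is a sketch built only from the settings named in this section; the shipped values-production.yaml may differ in other ways, so treat it as an illustration rather than a copy of that file.

```yaml
# Illustrative override with the production-oriented settings listed above
cluster:
  slaveCount: 3      # default: 2

networkPolicy:
  enabled: true      # default: false

metrics:
  enabled: true      # default: false
```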

Change Redis version

To modify the Redis version used in this chart you can specify a valid image tag using the image.tag parameter. For example, image.tag=X.Y.Z. This approach is also applicable to other images like exporters.
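In a values file, pinning the image versions might look like the following sketch. X.Y.Z is the same placeholder used above and must be replaced with a tag that actually exists in the registry; the second entry only illustrates that the exporter image is pinned the same way.

```yaml
# Illustrative only: replace X.Y.Z with a real, immutable image tag
image:
  registry: docker.io
  repository: bitnami/redis
  tag: X.Y.Z

metrics:
  image:
    repository: bitnami/redis-exporter
    tag: X.Y.Z       # exporter tags are versioned independently of Redis
```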

Cluster topologies

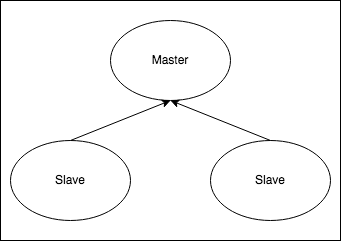

Default: Master-Slave

When installing the chart with cluster.enabled=true, it will deploy a Redis master StatefulSet (only one master node allowed) and a Redis slave StatefulSet. The slaves will be read-replicas of the master. Two services will be exposed:

- Redis Master service: Points to the master, where read-write operations can be performed

- Redis Slave service: Points to the slaves, where only read operations are allowed.

If the master crashes, the slaves will wait until the master node is respawned by the Kubernetes Controller Manager.

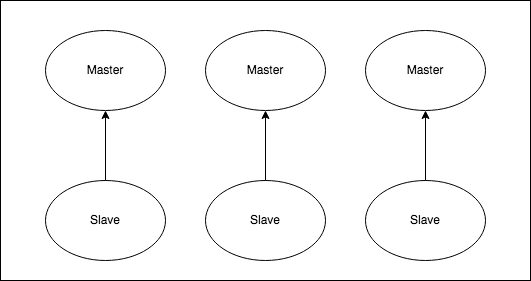

Master-Slave with Sentinel

When installing the chart with cluster.enabled=true and sentinel.enabled=true, it will deploy a Redis master StatefulSet (only one master allowed) and a Redis slave StatefulSet. In this case, the pods will contain an extra container with Redis Sentinel. This container will form a cluster of Redis Sentinel nodes, which will promote a new master in case the current one fails. In addition to this, only one service is exposed:

- Redis service: Exposes port 6379 for Redis read-only operations and port 26379 for accessing Redis Sentinel.

For read-only operations, access the service using port 6379. For write operations, it's necessary to access the Redis Sentinel cluster and query the current master using the command below (using redis-cli or similar):

SENTINEL get-master-addr-by-name <name of your MasterSet. Example: mymaster>

This command will return the address of the current master, which can be accessed from inside the cluster.

In case the current master crashes, the Sentinel containers will elect a new master node.
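A values sketch for this topology is shown below. Every key comes from the parameter table, and all values except sentinel.enabled are the documented defaults, repeated so the Sentinel tuning knobs are visible in one place.

```yaml
# Illustrative values for master-slave with Sentinel
cluster:
  enabled: true
  slaveCount: 2

sentinel:
  enabled: true                  # default: false
  masterSet: mymaster            # name passed to SENTINEL get-master-addr-by-name
  quorum: 2                      # sentinels that must agree before electing a new master
  downAfterMilliseconds: 60000   # time before a node is considered down
  failoverTimeout: 18000
  parallelSyncs: 1
```

The masterSet value is the name clients pass to SENTINEL get-master-addr-by-name.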

Using password file

To use a password file for Redis you need to create a secret containing the password.

Note: The file containing the password must be called redis-password

And then deploy the Helm Chart using the secret name as parameter:

usePassword=true

usePasswordFile=true

existingSecret=redis-password-file

sentinel.enabled=true

metrics.enabled=true
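A minimal sketch of a Secret that satisfies the naming requirement follows. It assumes the chart reads the password from a key named redis-password, which is what the note above implies; the secret name matches the existingSecret value used above, and the password is a placeholder.

```yaml
# Illustrative Secret; the key name redis-password follows the note above
apiVersion: v1
kind: Secret
metadata:
  name: redis-password-file
type: Opaque
stringData:
  redis-password: "replace-with-your-password"   # placeholder value
```

If the password is stored under a different key, the existingSecretPasswordKey parameter from the table is the documented way to point the chart at it.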

Securing traffic using TLS

TLS support can be enabled in the chart by specifying the tls.* parameters while creating a release. The following parameters should be configured to properly enable TLS support in the chart:

- tls.enabled: Enable TLS support. Defaults to false
- tls.certificatesSecret: Name of the secret that contains the certificates. No defaults.
- tls.certFilename: Certificate filename. No defaults.
- tls.certKeyFilename: Certificate key filename. No defaults.
- tls.certCAFilename: CA Certificate filename. No defaults.

For example:

First, create the secret with the certificate files:

kubectl create secret generic certificates-tls-secret --from-file=./cert.pem --from-file=./cert.key --from-file=./ca.pem

Then, use the following parameters:

tls.enabled="true"

tls.certificatesSecret="certificates-tls-secret"

tls.certFilename="cert.pem"

tls.certKeyFilename="cert.key"

tls.certCAFilename="ca.pem"
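The same parameters can be kept in a values file instead of being passed individually. The sketch below reuses the secret and file names from the example above; authClients is shown at its documented default.

```yaml
# Illustrative values-file form of the TLS parameters above
tls:
  enabled: true
  certificatesSecret: certificates-tls-secret
  certFilename: cert.pem
  certKeyFilename: cert.key
  certCAFilename: ca.pem
  authClients: true    # default: require clients to authenticate
```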

Note on TLS and Prometheus metrics: The current version of the Redis Metrics Exporter (v1.6.1 at the time of writing) does not fully support TLS. If both features are enabled, the metrics pod is unlikely to work as expected. See Redis Metrics Exporter issue 387 for more information.

Metrics

The chart can optionally start a metrics exporter for Prometheus. The metrics endpoint (port 9121) is exposed in the service. Metrics can be scraped from within the cluster using something similar to the example Prometheus scrape configuration. If metrics are to be scraped from outside the cluster, the Kubernetes API proxy can be used to access the endpoint.
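As a sketch, enabling the exporter together with a ServiceMonitor could look like this. It assumes the Prometheus Operator is already installed in the cluster; the selector is the documented default and should be adjusted to match your Prometheus install.

```yaml
# Illustrative values; assumes the Prometheus Operator is installed
metrics:
  enabled: true
  serviceMonitor:
    enabled: true              # also requires metrics.enabled to be true
    selector:
      prometheus: kube-prometheus
```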

Host Kernel Settings

Redis may require some changes in the kernel of the host machine to work as expected, in particular increasing the somaxconn value and disabling transparent huge pages.

To do so, you can set up a privileged initContainer with the sysctlImage config values, for example:

sysctlImage:
  enabled: true
  mountHostSys: true
  command:
    - /bin/sh
    - -c
    - |-
      install_packages procps
      sysctl -w net.core.somaxconn=10000
      echo never > /host-sys/kernel/mm/transparent_hugepage/enabled

Alternatively, for Kubernetes 1.12+ you can set securityContext.sysctls which will configure sysctls for master and slave pods. Example:

securityContext:
  sysctls:
    - name: net.core.somaxconn
      value: "10000"

Note that this will not disable transparent huge pages.

Persistence

By default, the chart mounts a Persistent Volume at the /data path. The volume is created using dynamic volume provisioning. If a Persistent Volume Claim already exists, specify it during installation.

Existing PersistentVolumeClaim

- Create the PersistentVolume

- Create the PersistentVolumeClaim

- Install the chart

$ helm install my-release --set persistence.existingClaim=PVC_NAME bitnami/redis
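For the second step, a PersistentVolumeClaim manifest might look like the sketch below. The claim name redis-data-existing is hypothetical and would be used in place of PVC_NAME; the access mode and size mirror the chart's documented persistence defaults and should be adapted to your volume.

```yaml
# Illustrative PVC; the name is hypothetical and stands in for PVC_NAME
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: redis-data-existing
spec:
  accessModes:
    - ReadWriteOnce
  resources:
    requests:
      storage: 8Gi
```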

Backup and restore

Backup

To perform a backup you will need to connect to one of the nodes and execute:

$ kubectl exec -it my-redis-master-0 -- bash

$ redis-cli

127.0.0.1:6379> auth your_current_redis_password

OK

127.0.0.1:6379> save

OK

Then you will need to get the created dump file from the Redis node:

$ kubectl cp my-redis-master-0:/data/dump.rdb dump.rdb -c redis

Restore

To restore in a new cluster, you will need to change a parameter in the redis.conf file and then upload the dump.rdb to the volume.

Follow these steps:

- First, set the appendonly parameter to no in values.yaml. If it is already no, you can skip this step.

configmap: |-
  # Disable AOF (https://redis.io/topics/persistence#append-only-file) so that dump.rdb is loaded on startup
  appendonly no
  # Disable automatic RDB snapshots
  save ""

- Start the new cluster to create the PVCs. For example:

helm install new-redis -f values.yaml . --set cluster.enabled=true --set cluster.slaveCount=3

- Now that the PVCs have been created, stop the cluster and copy dump.rdb onto the persisted data using a helper pod.

$ helm delete new-redis

$ kubectl run --generator=run-pod/v1 -i --rm --tty volpod --overrides='
{
    "apiVersion": "v1",
    "kind": "Pod",
    "metadata": {
        "name": "redisvolpod"
    },
    "spec": {
        "containers": [{
            "command": [
                "tail",
                "-f",
                "/dev/null"
            ],
            "image": "bitnami/minideb",
            "name": "mycontainer",
            "volumeMounts": [{
                "mountPath": "/mnt",
                "name": "redisdata"
            }]
        }],
        "restartPolicy": "Never",
        "volumes": [{
            "name": "redisdata",
            "persistentVolumeClaim": {
                "claimName": "redis-data-new-redis-master-0"
            }
        }]
    }
}' --image="bitnami/minideb"

$ kubectl cp dump.rdb redisvolpod:/mnt/dump.rdb

$ kubectl delete pod volpod

- Start the cluster again:

helm install new-redis -f values.yaml . --set cluster.enabled=true --set cluster.slaveCount=3

NetworkPolicy

To enable network policy for Redis, install a networking plugin that implements the Kubernetes NetworkPolicy spec, and set networkPolicy.enabled to true.

For Kubernetes v1.5 & v1.6, you must also turn on NetworkPolicy by setting the DefaultDeny namespace annotation. Note: this will enforce policy for all pods in the namespace:

kubectl annotate namespace default "net.beta.kubernetes.io/network-policy={\"ingress\":{\"isolation\":\"DefaultDeny\"}}"

With NetworkPolicy enabled, only pods with the generated client label will be able to connect to Redis. This label will be displayed in the output after a successful install.

With networkPolicy.ingressNSMatchLabels, pods from other namespaces can connect to Redis. Set networkPolicy.ingressNSPodMatchLabels to match pod labels in the matched namespace. For example, for a namespace labeled redis=external and pods in that namespace labeled redis-client=true, the fields should be set as follows:

networkPolicy:
  enabled: true
  ingressNSMatchLabels:
    redis: external
  ingressNSPodMatchLabels:
    redis-client: true
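On the client side, the namespace and pods need to carry the labels that the policy matches. The manifests below are a sketch: the namespace name redis-clients and the pod name redis-client are hypothetical, and the container simply idles so that a shell can be opened in it.

```yaml
# Illustrative client-side resources; names are hypothetical
apiVersion: v1
kind: Namespace
metadata:
  name: redis-clients
  labels:
    redis: external            # matched by ingressNSMatchLabels
---
apiVersion: v1
kind: Pod
metadata:
  name: redis-client
  namespace: redis-clients
  labels:
    redis-client: "true"       # matched by ingressNSPodMatchLabels
spec:
  containers:
    - name: client
      image: bitnami/redis
      command: ["tail", "-f", "/dev/null"]
```

Label values in Kubernetes manifests are strings, which is why "true" is quoted here.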

Upgrading an existing Release to a new major version

A major chart version change (like v1.2.3 -> v2.0.0) indicates that there is an incompatible breaking change needing manual actions.

To 11.0.0

When using sentinel, a new statefulset called -node was introduced. This will break upgrading from a previous version where the statefulsets are called master and slave. Hence the PVC will not match the new naming and won't be reused. If you want to keep your data, you will need to perform a backup and then restore the data in this new version.

To 10.0.0

For releases with usePassword: true, the value sentinel.usePassword controls whether the password authentication also applies to the sentinel port. This defaults to true for a secure configuration; however, it can be disabled to account for the following cases:

- Using a version of redis-sentinel prior to 5.0.1, where the authentication feature was introduced.

- Where redis clients need to be updated to support sentinel authentication.

If using a master/slave topology, or with usePassword: false, no action is required.

To 8.0.18

For releases with metrics.enabled: true the default tag for the exporter image is now v1.x.x. This introduces many changes including metrics names. You'll want to use this dashboard now. Please see the redis_exporter github page for more details.

To 7.0.0

This version causes a change in the Redis Master StatefulSet definition, so the command helm upgrade would not work out of the box. As an alternative, one of the following could be done:

- Recommended: Create a clone of the Redis Master PVC (for example, using projects like this one). Then launch a fresh release reusing this cloned PVC.

helm install my-release bitnami/redis --set persistence.existingClaim=<NEW PVC>

- Alternative (not recommended, do at your own risk):

helm delete --purge does not remove the PVC assigned to the Redis Master StatefulSet. As a consequence, the release can be upgraded by running the following commands:

helm delete --purge <RELEASE>

helm install <RELEASE> bitnami/redis

Previous versions of the chart were not using persistence in the slaves, so this upgrade would add it to them. Another important change is that no values are inherited from master to slaves. For example, in 6.0.0 slaves.readinessProbe.periodSeconds, if empty, would be set to master.readinessProbe.periodSeconds. This approach lacked transparency and was difficult to maintain. From now on, all the slave parameters must be configured just as it is done with the masters.

Some values have changed as well:

- master.port and slave.port have been changed to redisPort (same value for both master and slaves)

- master.securityContext and slave.securityContext have been changed to securityContext (same values for both master and slaves)

By default, the upgrade will not change the cluster topology. In case you want to use Redis Sentinel, you must explicitly set sentinel.enabled to true.

To 6.0.0

Previous versions of the chart were using an init-container to change the permissions of the volumes. This was done in case the securityContext directive in the template was not enough for that (for example, with cephFS). In this new version of the chart, this container is disabled by default (which should not affect most deployments). If your installation still requires that init container, execute helm upgrade with the --set volumePermissions.enabled=true flag.

To 5.0.0

The default image in this release may be switched out for any image containing the redis-server and redis-cli binaries. If redis-server is not the default image ENTRYPOINT, master.command must be specified.

Breaking changes

- master.args and slave.args are removed. Use master.command or slave.command instead in order to override the image entrypoint, or master.extraFlags to pass additional flags to redis-server.

- disableCommands is now interpreted as an array of strings instead of a string of comma separated values.

- master.persistence.path now defaults to /data.

To 4.0.0

This version removes the chart label from the spec.selector.matchLabels, which is immutable since StatefulSet apps/v1beta2. It had been inadvertently added, causing any subsequent upgrade to fail. See https://github.com/helm/charts/issues/7726.

It also fixes https://github.com/helm/charts/issues/7726 where a deployment extensions/v1beta1 cannot be upgraded if spec.selector is not explicitly set.

Finally, it fixes https://github.com/helm/charts/issues/7803 by removing mutable labels in spec.VolumeClaimTemplate.metadata.labels so that it is upgradable.

In order to upgrade, delete the Redis StatefulSet before upgrading:

$ kubectl delete statefulsets.apps --cascade=false my-release-redis-master

And edit the Redis slave (and metrics if enabled) deployment:

kubectl patch deployments my-release-redis-slave --type=json -p='[{"op": "remove", "path": "/spec/selector/matchLabels/chart"}]'

kubectl patch deployments my-release-redis-metrics --type=json -p='[{"op": "remove", "path": "/spec/selector/matchLabels/chart"}]'

Notable changes

11.0.0

When deployed with sentinel enabled, only a single group of nodes is deployed and the master/slave role is handled within the group. To avoid breaking compatibility, the settings for these nodes are given through the slave.xxxx parameters in values.yaml.

9.0.0

The metrics exporter has been changed from a separate deployment to a sidecar container, due to the latest changes in the Redis exporter code. Check the official page for more information. The metrics container image was changed from oliver006/redis_exporter to bitnami/redis-exporter (Bitnami's maintained package of oliver006/redis_exporter).

7.0.0

In order to improve performance in case of slave failure, we added persistence to the read-only slaves. That means that we moved from Deployments to StatefulSets. This should not affect upgrades from previous versions of the chart, as the deployments did not contain any persistence at all.

This version also allows enabling Redis Sentinel containers inside of the Redis Pods (feature disabled by default). In case the master crashes, a new Redis node will be elected as master. Since no Redis master service is exposed, you first need to query the Sentinel cluster to find the current master. Find more information in this section.
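Querying Sentinel for the current master can be sketched with redis-cli and the SENTINEL get-master-addr-by-name command. The sketch below makes several assumptions that should be checked against your release: the Sentinel port 26379 (the Redis Sentinel default), a master set named mymaster, the host my-release-redis in the usage comment, and no password authentication. The helper name current_master and the REDIS_CLI variable are hypothetical.

```shell
#!/bin/sh
# Client binary to use; overridable for testing.
REDIS_CLI="${REDIS_CLI:-redis-cli}"

# current_master SENTINEL_HOST [SENTINEL_PORT] [MASTER_SET]  (hypothetical helper)
# Asks Sentinel for the current master and prints it as "host:port".
current_master() {
  # When not attached to a terminal, redis-cli prints the host and the port
  # on separate lines; paste joins them with a colon.
  "$REDIS_CLI" -h "$1" -p "${2:-26379}" \
    sentinel get-master-addr-by-name "${3:-mymaster}" | paste -sd: -
}

# Usage (assumed service name): current_master my-release-redis
```

Because the master can change after a failover, clients should repeat this lookup when a connection to the master fails rather than caching the address indefinitely.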